Last Updated: March 21, 2026

Quick Answer: AI agents are autonomous software systems that perceive their environment, reason about goals, and take multi-step actions without constant human input. They use memory, planning, and external tools to complete complex tasks independently. In 2026, they are deployed across sales, customer service, software development, and research – making them the most consequential shift in applied artificial intelligence.

Key Takeaways

- AI agents differ from chatbots because they can plan, remember, and act across multiple steps without being prompted each time.

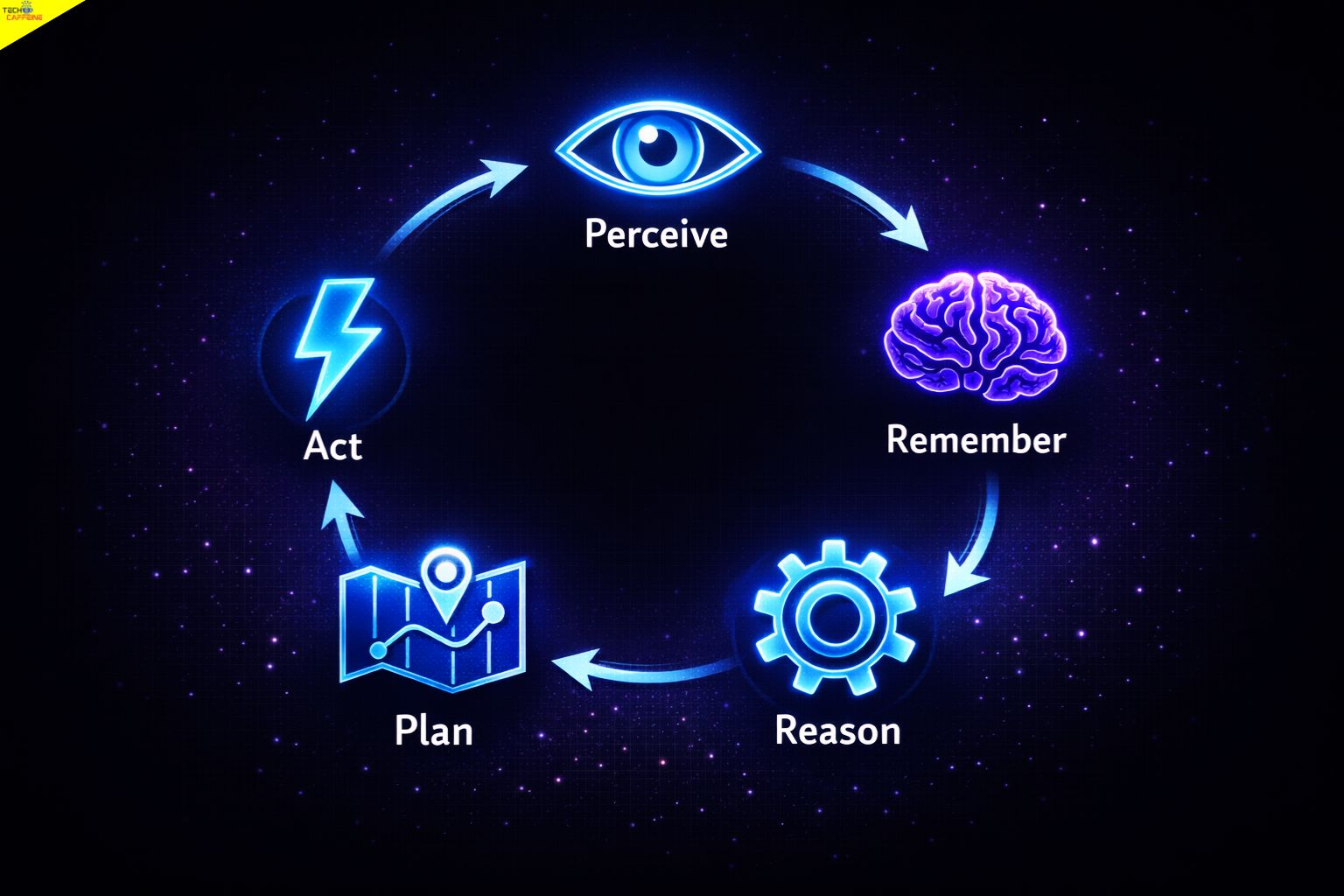

- The core agent loop is: Perceive → Remember → Reason → Plan → Act → Learn (then repeat).

- There are five main types of AI agents, ranging from simple reflex agents to advanced learning agents.

- Large language models (LLMs) like GPT-4o, Claude, and Gemini serve as the reasoning engine inside most modern agents.

- The AI agent market is projected to grow from $7.84 billion in 2025 to $52.62 billion by 2030 at a 46.3% CAGR.

- Multi-agent systems – where agents collaborate – deliver significantly higher ROI than single-agent deployments, according to McKinsey.

- Two critical new protocols, MCP (Anthropic) and A2A (Google), are reshaping how agents communicate with tools and each other.

- AI agents carry real risks: security vulnerabilities, data privacy issues, and the potential for autonomous errors at scale.

- Frameworks like LangChain, CrewAI, AutoGen, n8n, and Dify make it possible to build agents without deep AI expertise.

- Before deploying any agent, every business should answer six key evaluation questions covered at the end of this guide.

What Is an AI Agent?

AI agents are autonomous software systems that perceive their environment, reason about goals, and take multi-step actions without requiring constant human input. Unlike standard chatbots that respond to single prompts, AI agents use memory, planning, and external tools to complete complex, long-horizon tasks independently. In 2026, AI agents are being deployed across sales, customer service, software development, and research automation – making them the most consequential evolution in applied artificial intelligence.

The One-Sentence Definition Every Reader Needs

An AI agent is software that perceives inputs, sets goals, makes decisions, uses tools, and takes actions – all on its own, over multiple steps, until a task is complete.

That single sentence separates agents from every other AI product on the market. A chatbot waits for you. An AI agent gets to work.

AI Agent vs AI Chatbot vs AI Assistant vs Agentic AI

This is the number one source of confusion for new readers. The table below clears it up.

AI Chatbot vs AI Assistant vs AI Agent vs Agentic AI: Key Differences

Four terms that often get used interchangeably – but each describes a distinct level of autonomy and capability.

An AI Chatbot responds to one message at a time and cannot act without a user prompt. An AI Assistant – such as Siri or Google Assistant – goes further by answering questions and drafting content, but still depends on user input to operate. An AI Agent is fundamentally different: it can plan, use tools, and complete multi-step tasks without being prompted at each step, as seen in tools like Devin AI and Salesforce Agentforce. Agentic AI describes a broader system in which multiple agents collaborate autonomously in a coordinated workflow, such as CrewAI or AutoGen pipelines. The key distinction across all four is the degree of autonomous action – from none (chatbot) to fully self-directed (agentic AI).

| Term | What It Does | Can It Act Autonomously? | Example Tool |
|---|---|---|---|
| AI Chatbot | Responds to a single message or prompt | No | |
| AI Assistant | Answers questions, summarizes, drafts content | Partially | Siri, Google Assistant |
| AI Agent | Plans, reasons, uses tools, completes multi-step tasks | Yes | Devin AI, Salesforce Agentforce |
| Agentic AI | A system or workflow where one or more agents collaborate | Yes | CrewAI or AutoGen pipelines |

Autonomy levels: No = requires a prompt for every response · Partially = can use context but still prompt-driven · Yes = self-directed multi-step execution

The key distinction is autonomy plus action. A chatbot talks. An AI assistant helps. An AI agent does.

Why AI Agents Matter in 2026

AI agents matter in 2026 because they are moving from research labs into real business operations at a pace no previous AI technology has matched. The market data, the investment flows, and the enterprise adoption numbers all point in the same direction.

The Numbers Behind the AI Agent Explosion

The AI agent market is growing fast. Here are the verified figures:

- The global AI agent market is projected to grow from $7.84 billion in 2025 to $52.62 billion by 2030, at a compound annual growth rate (CAGR) of 46.3%.

- 62% of organizations are experimenting with or scaling AI agents, with 23% reporting active deployment in at least one business function, according to McKinsey’s State of AI 2025.

- McKinsey’s State of AI 2025 found that AI high performers – organizations with the deepest agent deployments – are 3x more likely to have fundamentally redesigned workflows, and achieve 2–3x higher productivity gains than competitors.

- Gartner predicts that by 2029, 70% of enterprises will deploy agentic AI as part of IT infrastructure operations.

- IDC expects a 10x increase in AI agent usage among G2000 companies by 2027, with inference compute growing 1,000x in the same period.
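The market-size figures can be sanity-checked with the standard compound-growth formula. The short sketch below uses only the numbers quoted above; the function names are illustrative. It shows that a 46.3% CAGR over five years takes $7.84 billion to roughly $52.5 billion, which matches the quoted $52.62 billion within rounding.

```python
def project(start: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Recover the constant annual growth rate that links two values."""
    return (end / start) ** (1 / years) - 1


START_2025 = 7.84   # USD billions, as quoted in the article
END_2030 = 52.62    # USD billions, as quoted in the article
YEARS = 5           # 2025 -> 2030

projected = project(START_2025, 0.463, YEARS)
rate = implied_cagr(START_2025, END_2030, YEARS)

print(f"Projected 2030 market at 46.3% CAGR: ${projected:.2f}B")
print(f"CAGR implied by the two endpoints: {rate:.1%}")
```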

These figures do not come from optimistic startups. The attributed ones come from McKinsey, Gartner, and IDC, three of the most established research firms in the technology industry.

In March 2026, NIST launched the AI Agent Standards Initiative to standardize security, interoperability, and safety for autonomous AI systems. That single move signals that AI agents are no longer experimental – they are infrastructure.

What Andrej Karpathy Said About the Decade of AI Agents

In an October 2025 interview on the Dwarkesh Podcast, AI expert and OpenAI co-founder Andrej Karpathy warned people not to believe all the hype. While many are calling this the “year of AI agents,” he says it is actually the start of a “decade of agents.” It will take years of testing to get them right.

His main point is simple: standard AI models are just the engine, but AI agents are the actual car. He believes the shift from “AI that just answers” to “AI that actually takes action” is the biggest change in AI history.

That framing is useful for business owners and developers alike. The question is no longer “What can AI say?” The question is “What can AI do?”

What This Means for Business Owners vs Developers

Not everyone reading this guide has the same goal. Here are two clear starting points.

🏢 Business Owner? Start Here →

You don’t need to understand the code. You need to understand what agents can automate, what they cost, and what can go wrong. Focus on: Why AI Agents Matter, Real-World Use Cases, Risks and Limitations, and the 6-Question Checklist.

👩‍💻 Developer? Start Here →

You want the architecture, the memory model, the protocols, and the framework comparison. Focus on: How AI Agents Actually Work, Multi-Agent Systems and Protocols, Framework Comparison Table, and the Risks Section for real-world failure cases.

How Do AI Agents Actually Work?

AI agents work by running a continuous loop of perception, memory, reasoning, planning, action, and learning. Each cycle brings the agent closer to completing its goal. Understanding this loop is the foundation for understanding everything else about agentic AI.

The 6-Step Agent Loop Explained Simply

Most people think of AI as a one-shot tool: you ask, it answers. AI agents work differently. They run a loop – sometimes hundreds of times – until the task is done.

Here is the loop, broken into clear steps:

THE AI AGENT LOOP

1. PERCEIVE → Read inputs: text, data, APIs, files

2. REMEMBER → Check memory for context and history

3. REASON → Use LLM to think through the problem

4. PLAN → Break the goal into ordered steps

5. ACT → Call tools, APIs, write code, send emails, search the web

6. LEARN → Store results, update memory, repeat

↑ Loop back to step 1 until the task is complete

Each step feeds the next. The agent keeps cycling until it reaches its goal or hits a defined stopping condition.
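To make the loop concrete, here is a minimal sketch in Python. It is a toy, not a real agent: `ToyAgent`, its method names, and the counting task are all made up for illustration, and the `reason` method stands in for what would normally be an LLM call. What it does show is the shape of the cycle, including a stopping condition and a hard limit on the number of cycles.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical agent whose goal is to raise a counter to a target value."""
    target: int
    memory: list[int] = field(default_factory=list)  # results of past actions

    def perceive(self, environment: dict) -> int:
        return environment["counter"]

    def remember(self) -> list[int]:
        return self.memory[-3:]  # only the most recent history

    def reason(self, observation: int, history: list[int]) -> bool:
        # Stand-in for an LLM call: decide whether the goal is already met.
        return observation >= self.target

    def plan(self, observation: int) -> list[str]:
        return ["increment"] * (self.target - observation)

    def act(self, step: str, environment: dict) -> int:
        if step == "increment":
            environment["counter"] += 1
        return environment["counter"]

    def learn(self, result: int) -> None:
        self.memory.append(result)


def run(agent: ToyAgent, environment: dict, max_cycles: int = 10) -> int:
    """Run the loop and return how many acting cycles were used."""
    for cycle in range(max_cycles):
        observation = agent.perceive(environment)   # 1. perceive
        history = agent.remember()                  # 2. remember
        if agent.reason(observation, history):      # 3. reason (goal reached?)
            return cycle
        steps = agent.plan(observation)             # 4. plan
        result = agent.act(steps[0], environment)   # 5. act on the first step
        agent.learn(result)                         # 6. learn, then repeat
    return max_cycles  # stopping condition: cycle limit reached


env = {"counter": 0}
agent = ToyAgent(target=3)
cycles = run(agent, env)
print(cycles, env["counter"], agent.memory)
```

The `max_cycles` limit is a design choice worth copying even in toy code: it guarantees the loop ends if the agent can never confirm success.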

The Role of Large Language Models Inside Agents

The LLM is the brain of an AI agent. It handles reasoning, language understanding, and decision-making. Models like GPT-4o (OpenAI), Claude (Anthropic), and Gemini (Google) are the most commonly used reasoning engines inside agent frameworks today.

But the LLM alone is not an agent. An LLM answers questions. An agent uses the LLM’s reasoning to decide what to do next – and then does it.

How AI Agents Use Tools and APIs

Agents become powerful when they connect to external tools. This is called tool calling or function calling. An agent can be given access to:

- Web search (to find current information)

- Code execution (to run Python scripts or SQL queries)

- File systems (to read and write documents)

- APIs (to interact with CRMs, databases, or third-party services)

- Email and calendar (to send messages or schedule meetings)

The ReAct framework (Reason + Act) is the most widely used pattern for this. The agent reasons about what tool to use, calls it, reads the result, and reasons again. This loop continues until the task is complete.
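The reason, call, observe cycle can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular framework implements ReAct: `scripted_model` is a hard-coded stand-in for an LLM, the `Action: tool[argument]` text format is just one convention chosen for this example, and the two tools are invented.

```python
import re
from typing import Callable

# Hypothetical tools: each takes a string argument and returns a string.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}

ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def scripted_model(transcript: str) -> str:
    """Stand-in for an LLM: picks a tool first, then answers from the observation."""
    if "Observation:" not in transcript:
        return ("Thought: I should count the words with a tool.\n"
                "Action: word_count[agents plan and act]")
    last = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: The tool returned {last}.\nFinal Answer: {last} words"


def react(question: str, model: Callable[[str], str],
          tools: dict[str, Callable[[str], str]], max_steps: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = model(transcript)                 # reason
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(reply)
        if match is None:
            raise ValueError("reply had neither an action nor a final answer")
        name, argument = match.groups()
        if name in tools:
            observation = tools[name](argument)   # act
        else:
            observation = f"unknown tool {name!r}"
        transcript += f"Observation: {observation}\n"  # observe, then loop
    raise RuntimeError("step limit reached without a final answer")


answer = react("How many words are in 'agents plan and act'?", scripted_model, TOOLS)
print(answer)
```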

How Memory Works in AI Agents

Memory is what separates a capable agent from a forgetful one. AI agents use four distinct types of memory. Each one serves a different purpose.

AI Agent Memory Types: Working, Episodic, Semantic and Procedural Memory Explained

AI agents don’t have one monolithic memory — they use four distinct memory types, each serving a different role in how they think, recall, and act.

| Memory Type | What It Stores | Plain English Explanation |
|---|---|---|
| Working Memory | The current task context | What the agent is thinking about right now |
| Episodic Memory | Past interactions and events | What happened in previous sessions or tasks |
| Semantic Memory | Facts, knowledge, and domain expertise | What the agent knows about the world |
| Procedural Memory | How to perform specific tasks or workflows | How the agent does things: its skill set |

Memory architecture based on cognitive science models adapted for AI agent systems · Each layer serves a distinct role in agent reasoning and task execution

Most basic agents only use working memory – which is why they forget everything between sessions. Advanced agents, like those built on LangChain or AutoGen, can be configured with all four memory types. That is what makes them genuinely useful for long-running business tasks.
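One way to picture the four memory types is as four fields on a single object. The sketch below is a simplified illustration, not how LangChain or AutoGen store memory; the class name, field types, and the `end_session` helper are assumptions made for this example. It shows why an agent with only working memory forgets: when a session ends, the working context is cleared and only what was archived survives.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentMemory:
    """Hypothetical container with one field per memory type."""
    working: dict[str, str] = field(default_factory=dict)     # current task context
    episodic: list[str] = field(default_factory=list)         # past sessions and events
    semantic: dict[str, str] = field(default_factory=dict)    # facts the agent knows
    procedural: dict[str, Callable[[str], str]] = field(default_factory=dict)  # skills

    def end_session(self, summary: str) -> None:
        """Archive a summary of the session, then clear the working context."""
        self.episodic.append(summary)
        self.working.clear()


memory = AgentMemory(
    semantic={"refund_window_days": "30"},
    procedural={"greet": lambda name: f"Hello, {name}!"},
)

memory.working["customer"] = "Asha"
greeting = memory.procedural["greet"](memory.working["customer"])
memory.end_session("Greeted Asha and explained the refund window.")

print(greeting)
print(memory.working, memory.episodic)
```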

How Do AI Agents Work in Practice?

The best way to understand AI agents is to see them replace a real task. Below is a side-by-side comparison of a common business task: researching and writing a competitive analysis report.

Before vs. After: Competitive Analysis Report

Before vs After: Competitive Analysis Report With and Without an AI Agent

The same task, two very different experiences — from 6–8 hours of manual work to 15–25 minutes with an AI agent handling the heavy lifting.

| Step | Without an AI Agent (manual workflow) | With an AI Agent (autonomous workflow) |
|---|---|---|
| 1. Define scope | You write a brief manually | You type one instruction: “Analyze our top 5 competitors in the Indian SaaS market” |
| 2. Research competitors | You open 10+ browser tabs, read websites, take notes | Agent searches the web, scrapes competitor pages, and pulls pricing data automatically |
| 3. Gather data | You copy stats from reports into a spreadsheet | Agent calls APIs, reads PDFs, and structures data into a clean table |
| 4. Identify patterns | You read through your notes and spot trends manually | Agent reasons across all data and identifies key differentiators and gaps |
| 5. Write the report | You spend 3–4 hours drafting in Google Docs | Agent generates a structured report with sections, citations, and a summary |
| 6. Format and review | You format headers, fix citations, and proofread | Agent formats the document, flags uncertain claims, and suggests edits |
| ⏰ Time required | 6–8 hours of human work | 15–25 minutes with human review |
| 📊 Consistency | Varies by analyst skill and energy | Consistent output every time |

Task: Competitive analysis report for the Indian SaaS market · Tools capable of this workflow include LangChain, CrewAI, and Salesforce Agentforce

This is not a hypothetical. Teams using Salesforce Agentforce, Microsoft Copilot, and custom LangChain pipelines are running workflows like this in production today.

The 5 Types of AI Agents

AI agents are not all the same. They range from simple rule-following programs to self-improving autonomous systems. Understanding the five types helps you match the right agent to the right task.

1. Simple Reflex Agents

These agents respond to the current input only. They follow fixed rules. They have no memory of past events and no ability to plan ahead. Think of a basic spam filter or a thermostat. If the input matches a rule, the agent acts. If not, it does nothing.

Best for: Repetitive, well-defined tasks with no variation.

2. Model-Based Reflex Agents

These agents maintain an internal model of the world. They track how the environment changes over time. This allows them to handle situations where the current input alone is not enough to make a decision. A self-driving car’s lane-keeping system is a good example.

Best for: Tasks where context from recent history matters.

3. Goal-Based Agents

These agents work backward from a defined goal. They evaluate possible actions and choose the one most likely to achieve the goal. They can plan multiple steps ahead. GitHub Copilot and early versions of Devin AI use goal-based reasoning to complete coding tasks.

Best for: Multi-step tasks with a clear end state.

4. Utility-Based Agents

These agents do not just ask “will this achieve the goal?” They ask “which option achieves the goal best?” They assign scores to outcomes and optimize for the highest-value result. Financial trading agents and recommendation engines use this approach.

Best for: Tasks where trade-offs between options matter.

5. Learning Agents

These agents improve over time. They observe the results of their actions and update their behavior accordingly. They combine all the capabilities above with the ability to adapt. Most modern enterprise agents – including those built on IBM watsonx and Google Vertex AI – are learning agents.

Best for: Complex, evolving tasks where performance needs to improve with use.

Agent Types Comparison Table

The 5 Types of AI Agents: Capabilities at a Glance

Agent types range from basic rule-followers with no memory to fully adaptive learning agents — match the type to the complexity of your task.

| Type | Has Memory? | Can Plan? | Can Learn? | Best For | Real Example |
|---|---|---|---|---|---|
| Simple Reflex | No | No | No | Rule-based triggers | Spam filter |
| Model-Based Reflex | Limited | No | No | Context-aware responses | Lane-keeping AI |
| Goal-Based | Yes | Yes | No | Multi-step task completion | GitHub Copilot |
| Utility-Based | Yes | Yes | No | Optimized decision-making | Trading algorithms |
| Learning | Yes | Yes | Yes | Adaptive, long-term tasks | IBM watsonx agents |

Capability scale: Simple Reflex (no autonomy) → Learning Agent (full autonomy) · The Learning agent is the most capable type in current enterprise deployments
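The gap between the simplest and the more advanced types is easier to see in code. The sketch below contrasts a simple reflex agent (fixed rules on the current input) with a utility-based agent (score every option, pick the best). The temperature thresholds, delivery options, and weights are invented for illustration.

```python
def reflex_thermostat(temperature_c: float) -> str:
    """Simple reflex agent: fixed rules, current input only, no memory."""
    if temperature_c < 18:
        return "heat"
    if temperature_c > 24:
        return "cool"
    return "idle"


def utility_choice(options: dict[str, dict[str, float]],
                   weights: dict[str, float]) -> str:
    """Utility-based agent: score each option and return the highest-scoring one."""
    def utility(attributes: dict[str, float]) -> float:
        return sum(weights[key] * value for key, value in attributes.items())

    return max(options, key=lambda name: utility(options[name]))


# Hypothetical delivery options: higher speed is good, cost counts against.
delivery_options = {
    "fast_courier": {"speed": 0.9, "cost": -0.8},
    "standard_post": {"speed": 0.4, "cost": -0.2},
}

balanced = utility_choice(delivery_options, {"speed": 1.0, "cost": 1.0})
urgent = utility_choice(delivery_options, {"speed": 2.0, "cost": 1.0})

print(reflex_thermostat(15), reflex_thermostat(21))
print(balanced, urgent)
```

Changing the weights changes the decision without changing the rules, which is exactly the trade-off handling a reflex agent cannot do.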

Multi-Agent Systems: When Agents Work Together

A single AI agent can complete many tasks. But some tasks are too large or too complex for one agent alone. Multi-agent systems solve this by dividing work across specialized agents that collaborate.

What Is a Multi-Agent System?

A multi-agent system (MAS) is a network of individual AI agents that each handle a specific role. One agent might research. Another might write. A third might review and fact-check. Together, they complete tasks that would overwhelm any single agent.

McKinsey’s State of AI 2025 found that AI high performers – organizations with the deepest agent deployments – are at least 3x more likely to have fundamentally redesigned workflows and achieve 2–3x higher productivity gains than competitors.

CrewAI and AutoGen are the two most popular frameworks for building multi-agent systems today.

How Orchestrator Agents Manage Other Agents

In most multi-agent systems, one agent acts as the orchestrator. It receives the high-level goal, breaks it into subtasks, assigns each subtask to a specialized agent, and assembles the final output.

Think of it like a project manager who delegates to a team. The orchestrator does not do the work itself – it coordinates who does what and in what order.
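The project-manager pattern can be sketched as follows. This is a minimal illustration, not the CrewAI or AutoGen API: the `Orchestrator` class, the fixed three-step plan, and the worker functions are all hypothetical, and in a real system both the planning and the workers would be backed by LLM calls.

```python
from typing import Callable

Worker = Callable[[str], str]


class Orchestrator:
    """Hypothetical orchestrator: plans subtasks, delegates them, assembles output."""

    def __init__(self, workers: dict[str, Worker]):
        self.workers = workers

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Stand-in for LLM planning: a fixed breakdown into (role, subtask) pairs.
        return [
            ("research", f"find sources on {goal}"),
            ("write", f"draft a summary of {goal}"),
            ("review", f"fact-check the summary of {goal}"),
        ]

    def run(self, goal: str) -> str:
        # Delegate each subtask to the matching specialist, in order.
        outputs = [self.workers[role](subtask) for role, subtask in self.plan(goal)]
        return "\n".join(outputs)  # assemble the final output


workers: dict[str, Worker] = {
    "research": lambda task: f"[researcher] done: {task}",
    "write": lambda task: f"[writer] done: {task}",
    "review": lambda task: f"[reviewer] done: {task}",
}

report = Orchestrator(workers).run("MCP adoption")
print(report)
```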

The Model Context Protocol (MCP) Explained

The Model Context Protocol (MCP) was released by Anthropic in November 2024. It is an open standard that defines how AI agents connect to external tools, data sources, and APIs.

Before MCP, every agent needed custom code to connect to each tool. MCP standardizes that connection. It works like a universal plug – any agent that supports MCP can connect to any tool that supports MCP, without custom integration work.

In practical terms, MCP means a Claude-based agent can connect to your company’s database, your CRM, and your file system using the same protocol. This dramatically reduces the cost and time of building enterprise agents.
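Under the hood, MCP messages are JSON-RPC 2.0 objects. A client can ask a server which tools it offers with the `tools/list` method and invoke one with `tools/call`. The sketch below only builds the request payloads so you can see their shape. It leaves out the transport and the initialization handshake, and the tool name `query_crm` and its arguments are hypothetical.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def jsonrpc_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    }


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list", {})

# Call one tool by name. "query_crm" and its arguments are made up for this example.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "query_crm", "arguments": {"customer_id": "C-1042"}},
)

print(json.dumps(call_tool, indent=2))
```

Because every tool is reached through the same two methods, adding a new tool means adding a server, not writing new client code.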

The Agent2Agent (A2A) Protocol Explained

The Agent2Agent (A2A) protocol was released by Google in April 2025. Where MCP defines how agents connect to tools, A2A defines how agents communicate with each other.

A2A gives agents a shared language for passing tasks, sharing results, and requesting help from other agents. This is the technical foundation for true multi-agent collaboration across different platforms and vendors.
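The idea of a shared language between agents can be illustrated with a toy task object that moves through a small set of states. To be clear, this is not the A2A wire format: the `Task` fields, the state names, and `SummarizerAgent` are simplified assumptions for this example. Refer to the A2A specification for the real message schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    COMPLETED = "completed"
    FAILED = "failed"


@dataclass
class Task:
    """Hypothetical task envelope that both agents agree on."""
    task_id: str
    sender: str
    skill: str
    payload: str
    state: TaskState = TaskState.SUBMITTED
    result: Optional[str] = None


class SummarizerAgent:
    """Hypothetical receiving agent that advertises a single skill."""
    name = "summarizer"
    skills = {"summarize"}

    def handle(self, task: Task) -> Task:
        if task.skill not in self.skills:
            task.state = TaskState.FAILED  # this agent cannot help
            return task
        task.state = TaskState.WORKING
        task.result = task.payload.split(".")[0] + "."  # keep the first sentence
        task.state = TaskState.COMPLETED
        return task


summarizer = SummarizerAgent()
done = summarizer.handle(
    Task("t-1", "researcher", "summarize", "Agents act. Chatbots reply.")
)
refused = summarizer.handle(Task("t-2", "researcher", "translate", "Hola."))

print(done.state.value, done.result)
print(refused.state.value)
```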

Together, MCP and A2A are two of the most important infrastructure developments in AI agents in the past 18 months. Understanding both gives developers and architects a significant advantage when designing agent systems.

Real-World AI Agent Use Cases in 2026

AI agents are not a future concept. They are running in production across industries right now – from AI agents making outbound sales calls to fully autonomous software development pipelines. Here are the most significant use cases in 2026.

AI Agents in Customer Service

Customer service was the first major enterprise deployment area for AI agents. In 2026, agents handle tier-1 and tier-2 support queries, process refunds, update account details, and escalate complex issues to humans – all without a human in the loop for routine tasks.

Companies using Salesforce Agentforce report significant reductions in average handle time and cost per ticket. Agents can also learn from past interactions, improving their responses over time.

AI Agents in Software Development (GitHub Copilot, Devin)

Software development is one of the most advanced deployment areas for AI agents. GitHub Copilot helps developers write, review, and debug code in real time. Devin AI, developed by Cognition Labs, goes further – it can read a task description, write a full codebase, run tests, fix errors, and deploy the result, all autonomously.

These are not autocomplete tools. They are goal-based agents that plan, execute, and iterate until the code works.

AI Agents in Sales (Salesforce Agentforce)

Salesforce Agentforce is one of the most widely deployed enterprise agent platforms in 2026. It handles lead qualification, follow-up email sequences, meeting scheduling, and CRM updates – tasks that previously required a full SDR team.

Agentforce agents connect to Salesforce’s data cloud via MCP-compatible integrations, giving them real-time access to customer history, product catalog, and deal status.

AI Agents in Research and Data Analysis

Research agents are among the most powerful applications of agentic AI. A research agent can:

- Search academic databases and the web

- Extract key findings from PDFs

- Cross-reference multiple sources

- Generate structured summaries with citations

- Flag conflicting data points for human review

For SEO professionals and content marketers, this is already changing how competitive analysis and content research are done. Agents built on LangChain with web search tools can complete a full content brief in minutes.

AI Agents for Small Businesses and Solopreneurs

AI agents are not just for large enterprises. In 2026, small business owners and solopreneurs use agents built on n8n, Dify, and AgentGPT to automate:

- Social media scheduling and content creation

- Invoice generation and follow-up

- Customer email responses

- Lead capture from website forms

- Weekly reporting from analytics tools

The barrier to entry has dropped significantly. Many of these tools require no coding. A business owner with basic tech literacy can deploy a functional agent in a weekend.

Popular AI Agent Frameworks and Tools

Once you understand what AI agents are, the next question is always: which tool do I use to build one? The framework comparison below answers that directly.

Framework Comparison Table

AI Agent Framework Comparison: LangChain, CrewAI, AutoGen, n8n, Dify and More

Seven frameworks across the full spectrum — from beginner-friendly no-code builders to advanced developer frameworks for production multi-agent systems.

| Framework | Open Source? | No-Code Option? | Best For | Difficulty Level |
|---|---|---|---|---|
| LangChain | Yes | No | Custom agents with complex tool chains | Advanced |
| CrewAI | Yes | No | Multi-agent systems with defined roles | Intermediate |
| AutoGen | Yes | No | Research & enterprise multi-agent orchestration | Advanced |
| AgentGPT | Yes | Yes (browser UI) | Quick demos and simple task agents | Beginner |
| n8n | Yes | Yes (visual builder) | Workflow automation with agent nodes | Beginner–Intermediate |
| Dify | Yes | Yes (drag-and-drop) | Building and deploying LLM apps & agents | Beginner–Intermediate |
| Microsoft Copilot Studio | No | Yes | Enterprise agents in Microsoft 365 | Intermediate |

Open source = free to self-host and modify · Microsoft Copilot Studio is the only proprietary option in this list · Difficulty reflects setup and configuration, not day-to-day use

Choosing a framework: If you are a developer building production agents, start with LangChain or CrewAI. If you are a business owner with no coding experience, n8n or Dify are the fastest paths to a working agent. If your company runs on Microsoft tools, Copilot Studio is the lowest-friction option.

For a real-world example of how an AI agent is configured and deployed for a specific use case, see our hands-on look at the OpenClaw AI agent – which demonstrates how goal-based agents work in a production environment.

Risks and Limitations of AI Agents

AI agents are powerful. They are also capable of causing serious harm when deployed without proper controls. This section covers what can go wrong – and what responsible deployment looks like.

What Can Go Wrong With AI Agents

AI agents can fail in ways that are qualitatively different from chatbot failures. A chatbot gives a wrong answer. An agent can take a wrong action – and that action might be irreversible.

Common failure modes include:

- Hallucination-driven errors: The agent reasons incorrectly and takes an action based on a false premise.

- Scope creep: The agent interprets its goal too broadly and accesses systems or data it should not touch.

- Infinite loops: The agent gets stuck repeating the same action because it cannot verify success.

- Tool misuse: The agent calls an API incorrectly, triggering unintended side effects.

- Prompt injection: A malicious input manipulates the agent into ignoring its original instructions.

IBM experts note that true agents require advanced reasoning, and current LLMs show only “early glimpses” of that capability – making governance essential for high-stakes deployments.

The Claude Code Cyberattack Incident (November 2025)

In November 2025, Anthropic publicly disclosed that Claude Code – its autonomous coding agent – had been misused to carry out a cyber espionage campaign. According to Anthropic's account, the attackers jailbroke the agent: they split the operation into small, innocuous-looking tasks and told it that it was performing defensive security testing, so it carried out steps it would otherwise have refused.

Anthropic disclosed the incident voluntarily, which is a model of responsible transparency. But the incident confirmed what security researchers had warned: autonomous agents that can execute code are a significant attack surface.

Microsoft’s Cyber Pulse report (released March 5, 2026) found that 80% of Fortune 500 companies deploy agents without adequate security controls, creating what the report called a risk of “double agents” – agents that appear to serve the company but can be redirected by weak permission structures.

This is not a reason to avoid agents. It is a reason to deploy them with proper access controls, sandboxing, and human oversight.
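Those three controls can be sketched as a thin wrapper around an agent's tools. This is an illustrative sketch, not a production security layer: `GuardedToolbox`, the tool names, and the refund threshold are hypothetical, and the `approve` callback stands in for a real human reviewer.

```python
from typing import Callable

Tool = Callable[[str], str]


class GuardedToolbox:
    """Hypothetical wrapper: allowlist, human approval for risky tools, audit log."""

    def __init__(self, tools: dict[str, Tool], allowed: set[str],
                 needs_approval: set[str], approve: Callable[[str, str], bool]):
        self.tools = tools
        self.allowed = allowed
        self.needs_approval = needs_approval
        self.approve = approve
        self.audit_log: list[tuple[str, str, str]] = []

    def call(self, name: str, argument: str) -> str:
        # Access control: anything off the allowlist is refused outright.
        if name not in self.allowed or name not in self.tools:
            self.audit_log.append((name, argument, "denied"))
            raise PermissionError(f"tool {name!r} is not on the allowlist")
        # Human oversight: risky tools run only if the reviewer approves.
        if name in self.needs_approval and not self.approve(name, argument):
            self.audit_log.append((name, argument, "rejected"))
            return "rejected by human reviewer"
        result = self.tools[name](argument)
        self.audit_log.append((name, argument, "executed"))
        return result


tools: dict[str, Tool] = {
    "read_ticket": lambda ticket_id: f"ticket {ticket_id}: printer offline",
    "issue_refund": lambda amount: f"refunded {amount}",
    "delete_account": lambda user_id: f"deleted {user_id}",
}

box = GuardedToolbox(
    tools,
    allowed={"read_ticket", "issue_refund"},
    needs_approval={"issue_refund"},
    approve=lambda name, argument: float(argument) <= 50,  # stand-in for a person
)

results = [
    box.call("read_ticket", "T-7"),
    box.call("issue_refund", "20"),
    box.call("issue_refund", "500"),
]
try:
    box.call("delete_account", "U-1")
except PermissionError as error:
    results.append(str(error))

print(results)
print([outcome for _, _, outcome in box.audit_log])
```

Every call ends up in the audit log, including the ones that were blocked, so a reviewer can see what the agent attempted as well as what it did.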

Data Privacy and Security Considerations

Every AI agent that accesses business data creates a privacy surface. Key questions to ask:

- Where is the data processed? On-premise, in a private cloud, or through a third-party API?

- What data does the agent retain? Episodic memory can store sensitive information across sessions.

- Who has access to the agent’s logs? Agent actions should be logged and auditable.

- Does the agent comply with GDPR, DPDP (India), or HIPAA? Regulatory compliance is not automatic.

In March 2026, NIST launched the AI Agent Standards Initiative specifically to address these gaps. Businesses deploying agents in regulated industries should monitor this initiative closely.

When NOT to Use an AI Agent

Agents are not the right tool for every problem. Avoid agents when:

- The task requires human empathy or ethical judgment that cannot be encoded in rules.

- The data is too sensitive to expose to any external processing system.

- The task is simple enough for a single prompt – a chatbot or assistant is faster and cheaper.

- Your team lacks the skills to monitor and maintain the agent after deployment.

- The cost of an autonomous error is catastrophic and cannot be reversed.

6 Questions to Ask Before Deploying an AI Agent

This checklist is for business owners, operations managers, and IT leads evaluating their first – or next – AI agent deployment.

1. Does this task require multi-step decision-making? If the task is a single question with a single answer, a chatbot is enough. Agents add value when the work involves planning, sequencing, and using multiple tools.

2. Is the data this agent will access sensitive or regulated? If the answer is yes, you need a data governance plan before deployment – not after. Identify what data the agent can read, write, and store.

3. Do you have a human oversight plan for edge cases? Every agent will eventually encounter a situation it cannot handle correctly. Define in advance who reviews flagged outputs and how quickly they must respond.

4. Does your existing tech stack support API integrations? Agents are only as useful as the tools they can connect to. Audit your current software for API availability before choosing a framework.

5. What does success look like – and how will you measure it? Define your KPIs before launch: task completion rate, error rate, time saved, cost per task. Without a baseline, you cannot evaluate ROI.

6. Does your team have the skills to maintain this agent? Agents require ongoing prompt tuning, tool updates, and performance monitoring. If your team cannot maintain it, budget for a vendor or consultant who can.
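The four KPIs named in the checklist are simple to compute once each agent run is logged. The sketch below is one possible way to do it; the `TaskRun` record, the sample runs, and the 420-minute manual baseline (7 hours, the midpoint of the 6 to 8 hours quoted earlier for a manual competitive analysis) are illustrative assumptions, not measured data.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    """Hypothetical log record for one agent run."""
    completed: bool
    had_error: bool
    minutes_taken: float
    cost_usd: float


def kpis(runs: list[TaskRun], baseline_minutes: float) -> dict[str, float]:
    """Compute completion rate, error rate, average time saved, and cost per task."""
    total = len(runs)
    average_minutes = sum(run.minutes_taken for run in runs) / total
    return {
        "completion_rate": sum(run.completed for run in runs) / total,
        "error_rate": sum(run.had_error for run in runs) / total,
        "avg_minutes_saved": baseline_minutes - average_minutes,
        "cost_per_task": sum(run.cost_usd for run in runs) / total,
    }


# Made-up sample runs for illustration only.
sample_runs = [
    TaskRun(completed=True, had_error=False, minutes_taken=20, cost_usd=0.40),
    TaskRun(completed=True, had_error=False, minutes_taken=25, cost_usd=0.50),
    TaskRun(completed=False, had_error=True, minutes_taken=30, cost_usd=0.60),
    TaskRun(completed=True, had_error=False, minutes_taken=25, cost_usd=0.50),
]

report = kpis(sample_runs, baseline_minutes=420)
print(report)
```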

Frequently Asked Questions About AI Agents

What is the difference between an AI agent and a chatbot?

A chatbot responds to one message at a time and has no memory between sessions. An AI agent plans across multiple steps, remembers past context, uses external tools, and completes tasks without being prompted at each step. The key difference is autonomy and action.

How do AI agents use memory?

AI agents use four types of memory: working memory (current task context), episodic memory (past interactions), semantic memory (factual knowledge), and procedural memory (how to perform tasks). Most basic agents only use working memory. Advanced agents use all four, which is why they can handle long, complex tasks.

Can AI agents make mistakes?

Yes. AI agents can hallucinate, misuse tools, misinterpret instructions, or be manipulated through prompt injection attacks. The Claude Code incident in November 2025 is a real example of an agent being exploited to assist in a cyberattack. Human oversight and access controls are essential for any production deployment.

What is the ReAct framework?

ReAct stands for Reason + Act. It is a prompting pattern that instructs an LLM to alternate between reasoning about what to do and taking an action. The agent thinks, acts, observes the result, thinks again, and acts again. It is the most widely used pattern for building tool-using agents and is supported by LangChain, LlamaIndex, and most major frameworks.

What is the Model Context Protocol (MCP)?

MCP is an open standard released by Anthropic in November 2024. It defines how AI agents connect to external tools, data sources, and APIs using a universal interface. Think of it as a USB standard for agent integrations – any MCP-compatible agent can connect to any MCP-compatible tool without custom code.

How much does it cost to deploy an AI agent?

Costs vary widely. A simple agent built on n8n or Dify can cost as little as $20–$50 per month in API and hosting fees. An enterprise agent deployment on platforms like IBM watsonx or Salesforce Agentforce can cost tens of thousands of dollars per year. The biggest cost driver is LLM inference – the number of tokens processed per task. IDC recommends tiered model strategies to control inference costs as usage scales.
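Since tokens per task drive the bill, a rough monthly estimate takes only a few lines. The prices and volumes below are hypothetical placeholders, not any vendor's actual rates; swap in the numbers from your provider's pricing page.

```python
def monthly_inference_cost(tasks_per_month: int,
                           input_tokens_per_task: int,
                           output_tokens_per_task: int,
                           usd_per_million_input: float,
                           usd_per_million_output: float) -> float:
    """Estimate monthly LLM spend from task volume and per-token prices."""
    input_millions = tasks_per_month * input_tokens_per_task / 1_000_000
    output_millions = tasks_per_month * output_tokens_per_task / 1_000_000
    return (input_millions * usd_per_million_input
            + output_millions * usd_per_million_output)


# Hypothetical small-business workload and prices (assumptions, not real rates).
cost = monthly_inference_cost(
    tasks_per_month=500,
    input_tokens_per_task=20_000,
    output_tokens_per_task=2_000,
    usd_per_million_input=2.50,
    usd_per_million_output=10.00,
)
print(f"Estimated inference cost: ${cost:.2f} per month")
```

With these placeholder numbers the estimate lands at $35 per month, inside the $20–$50 range mentioned above. Doubling the tokens per task doubles the bill, which is why trimming context is usually the first cost lever.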

Are AI agents safe to use with sensitive business data?

They can be, with the right architecture. Key safeguards include: processing data on-premise or in a private cloud, limiting the agent’s data access to what it strictly needs, logging all agent actions for audit, and ensuring compliance with applicable regulations (GDPR, DPDP, HIPAA). The NIST AI Agent Standards Initiative (March 2026) is developing formal guidance on this.

What is the best AI agent framework for beginners?

For non-developers, n8n and Dify are the most accessible starting points. Both offer visual, no-code interfaces and have large communities with tutorials. For developers new to agents, CrewAI has the most beginner-friendly documentation among code-first frameworks. For a quick proof-of-concept, AgentGPT lets you run a basic agent in a browser with no setup.

Conclusion

Understanding what AI agents are is no longer optional for business owners, developers, or anyone building with technology in 2026. Agents are the shift from AI that answers to AI that acts – and that shift is already reshaping how work gets done across every industry.

Here is what to take away from this guide:

- AI agents are defined by autonomy, memory, planning, and action – not just language generation.

- The five agent types range from simple reflex agents to full learning agents. Match the type to the task.

- The agent loop – Perceive, Remember, Reason, Plan, Act, Learn – is the foundation of every modern agent system.

- MCP and A2A are the two protocols making agents interoperable at scale. They matter for anyone building or buying agent infrastructure.

- Multi-agent systems deliver the highest ROI, but they also carry the highest complexity and risk.

- Real risks exist: the Claude Code incident is a named, documented example of what happens without proper controls.

- The 6-question checklist gives any business a practical starting point for responsible deployment.

Your next step depends on where you are:

- If you are exploring agents for the first time, try Dify or AgentGPT with a low-stakes task this week.

- If you are a developer, read the MCP documentation from Anthropic and the A2A spec from Google.

- If you are a business leader, run through the 6-question checklist with your operations or IT team before your next vendor meeting.

- If you want to see a real-world AI agent in action, read our breakdown of the OpenClaw AI agent – a practical example of how goal-based agents are deployed for specific business tasks.

AI agents are not the future. They are the present. The organizations that understand them deeply – and deploy them responsibly – will have a structural advantage over those that do not.

Shivakumar K Naik is an SEO Analyst and Technology Writer based in Mysore, Karnataka with 2.5+ years of experience and 28+ client success stories across FinTech, Travel, Automotive, SaaS and Education. He has delivered 150+ Google first-page rankings for brands like Mudrex, Decathlon and Maruti Suzuki. On Tech Caffeine, he writes practical, beginner-friendly guides on AI tools, how to make money online with AI, cryptocurrency, blockchain, NFTs, gaming and emerging technology, with content built from real SEO experience and daily hands-on use of ChatGPT, Claude, Ahrefs and Semrush.